Picture this: a train pierces the early morning fog, horn choo-chooing and smoke billowing past. Lights glimmer from within as commuters sit in almost-too-cozy booths, some reading the newest bestseller, some thumbing through their phones, and still others type-type-typing away at their laptops. That’s me, there, the one with a steaming travel mug of coffee at the miniature desk, frantically trying to get my latest R code to work. I’m on the first part of my commute, taking advantage of the coffee-shop-like atmosphere and free wifi on the train before getting onto the more bustling (and thus saved for reading) light rail and campus connector parts of my journey to work.

What do you do?

I am a postdoc research associate in the Department of Fisheries, Wildlife, and Conservation Biology, and I am working on developing modeling approaches to help the Minnesota DNR get better (i.e., more precise) estimates of moose abundance.

OK, but what does that mean in practice? My work as a postdoc in John Fieberg’s quantitative ecology lab keeps me constantly wrestling with several complicated data management tasks. I am often working on several coding and analysis projects at once, and I might have one analysis running for days or weeks while I also need to work on other projects. I often switch between my laptop, perhaps on the train or bus, and other work-related computers or servers at home or at the office, and I also need to be able to share my entire data and coding project with my collaborators.

Ultimately, I hope to produce code and run analyses within a reproducible workflow. That reduces my own headaches when, for instance, I need to re-analyze data after getting reviews back, and it also helps make science more transparent and accessible. Thus, I am constantly trying to learn new ways to make my day go smoothly, and I have discovered some nice tricks to ease some of these big data management challenges.

What tools/software/hardware/etc. do you use to do your work?

Program R with RStudio:

R is an open-source software platform for statistical computing and graphics that is quickly becoming the lingua franca among ecologists. The program is command-line driven and can be difficult to learn and use without a code editor with syntax highlighting. RStudio, at its simplest, is a free code editor with syntax highlighting that works seamlessly with R. However, I would argue that the best feature of RStudio is that it allows you to create “Projects” (specifically .Rproj files) that effectively package (and link) together all of your project-specific programs, code, output, and word processing documents. Projects support relative paths between files, which obviously saves time when typing (e.g., file="data/raw.csv" versus file="D://Users/aaarchmiller/Documents and Settings/…/raw.csv"), but also makes it possible for you to open your RStudio Project and run the code immediately on any computer that has R installed, greatly easing both collaboration and the portability of your work. Collaboration is also eased by the nice interplay between RStudio and GitHub, which I describe in more detail next. If you are an R user, making the switch to RStudio and using RStudio Projects is one of the easiest ways to improve your workflow.
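
As a minimal sketch of the relative-path idea, the R snippet below builds a throwaway project folder in a temporary directory and reads a file with the same relative path used in the example above. The folder name moose-project, the column names, and the data values are all made up for illustration, and setwd() only stands in for what opening the .Rproj file would normally do for you.

```r
# Hypothetical stand-in for a project folder. In practice, opening the
# .Rproj file sets the working directory to the project root for you.
project_root <- file.path(tempdir(), "moose-project")
dir.create(file.path(project_root, "data"),
           recursive = TRUE, showWarnings = FALSE)

# Made-up example data, written only so the sketch is self-contained.
write.csv(data.frame(plot = 1:3, moose_seen = c(2, 0, 5)),
          file.path(project_root, "data", "raw.csv"),
          row.names = FALSE)

# Mimic opening the Project by moving to the project root.
old_wd <- setwd(project_root)

# The relative path resolves against the project root, so this line is
# the same on a laptop, a remote server, or a collaborator's machine.
raw <- read.csv(file = "data/raw.csv")
print(raw)

# Restore the previous working directory.
setwd(old_wd)
```

Because nothing in the read.csv() call mentions a drive letter or user name, the same script should run unchanged wherever the project folder is copied or cloned.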

GitHub:

GitHub, a hosting service built around the Git version control system, is one of several ways to version control (that is, organize and back up) your work. Using GitHub, you can version control nearly any type of document, so it is great for backing up entire projects, including manuscripts, tables, figures, and code. The learning curve feels a little steep at first, but once you get GitHub running and linked with your projects, it really is as simple as saving any new work, “committing” the change with a descriptive message, and “pushing” the committed changes to the GitHub website. It is possible to have an unlimited number of free, open or “public” projects (repositories) on GitHub, or you can pay a nominal fee to have hidden “private” repositories. Collaborating is easy with GitHub because anyone can copy and modify the code in a public repository and then propose changes, which are merged with your main “branch” when and if you approve them. You can also set up specific collaborators who can modify your code directly without the need for you to approve each change.

UofM Remote Server:

The final thing that I use daily to make my work easier is a remote server to run my main analyses, which frees up my personal laptop for writing and other tasks. The remote server is managed by the University of Minnesota Information Technology services and is effectively another desktop computer that I can connect to and run from anywhere with an internet connection. The benefit of having a remote server, other than being able to work on it from home, the train, or the office, is that it does not become obsolete as readily as a dedicated desktop computer might. Thus, money that might traditionally go toward buying and maintaining computers for a grant-sponsored position such as mine can be used for more impactful things, such as traveling to and presenting at conferences.

How does it all work together?

Now that I have explained a few of the tools I use to make my day better, I will briefly describe how everything works together in a “day-in-the-life-of” segment:

As stated above, I am currently on the train. While on the train, I work on one of the RStudio Projects stored locally on my laptop. After I get a program coded and tested, I commit the changes with an informative commit message, such as “Updated the prior definition for shrub percent to dbeta().”

Once I get to the office, I want to run the new program I coded, and I know that this particular analysis will take about five hours. Rather than bog down my laptop for five hours, I want to run this analysis on the remote server. First, I push the committed changes to the main branch on the GitHub server and log in to the remote server using Microsoft Remote Desktop. Then, on the remote server, I open the RStudio Project and “pull” those new changes, and now I see the code that I modified earlier on the train.

After starting that analysis, I have five hours to work on something else. Hmm, perhaps I will work on the R package that my advisor and I are developing. So, back on my laptop, I open the appropriate RStudio Project and “pull” the changes that have been made since I last opened it. Aha! My advisor has been updating these files, and his most recent commit was last night at 11:23. There he goes burning the midnight oil again! I can review his changes line by line and see where my work needs to pick up.

Several commits, lunch, and a few mugs of tea later, I am ready to check out the results from the analysis on the remote server. I commit my changes to the collaborative project and push them to GitHub. Then I open the remote server again, save the new analysis results there, push them to GitHub, and pull them over to my laptop just in time for the commute home. On the commute, I can make some preliminary graphs and build a report using the knitr package, which I will email to my advisor when I get home later.

All in all, I’d say it’s been a productive day!

Twitter @aaarchmiller

GitHub github.com/aaarchmiller

www.chickmappers.com/althea

A note from the librarian:

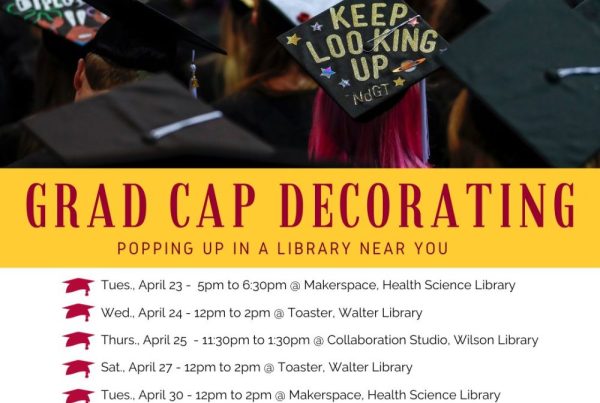

Althea has some great tips for being productive while on the move and has seamlessly integrated data management and data sharing into her workflow. More workflow ideas are available in the How I Work series, and the Libraries offer numerous services related to data management and sharing.
